The Humble Neuron and the Giant Machines Built From It

A short exploration of how simple biological neurons inspired the massive neural networks powering modern AI, and why this tiny idea still shapes today’s biggest technologies.

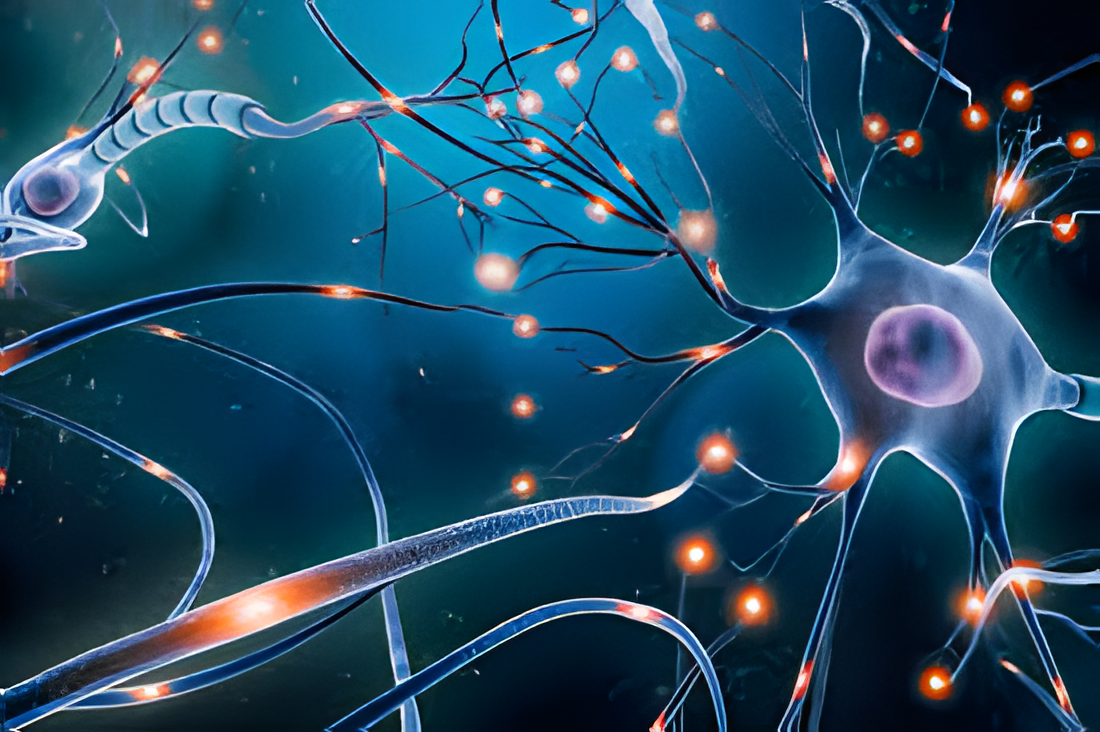

The Tiny Cell Behind Every Thought

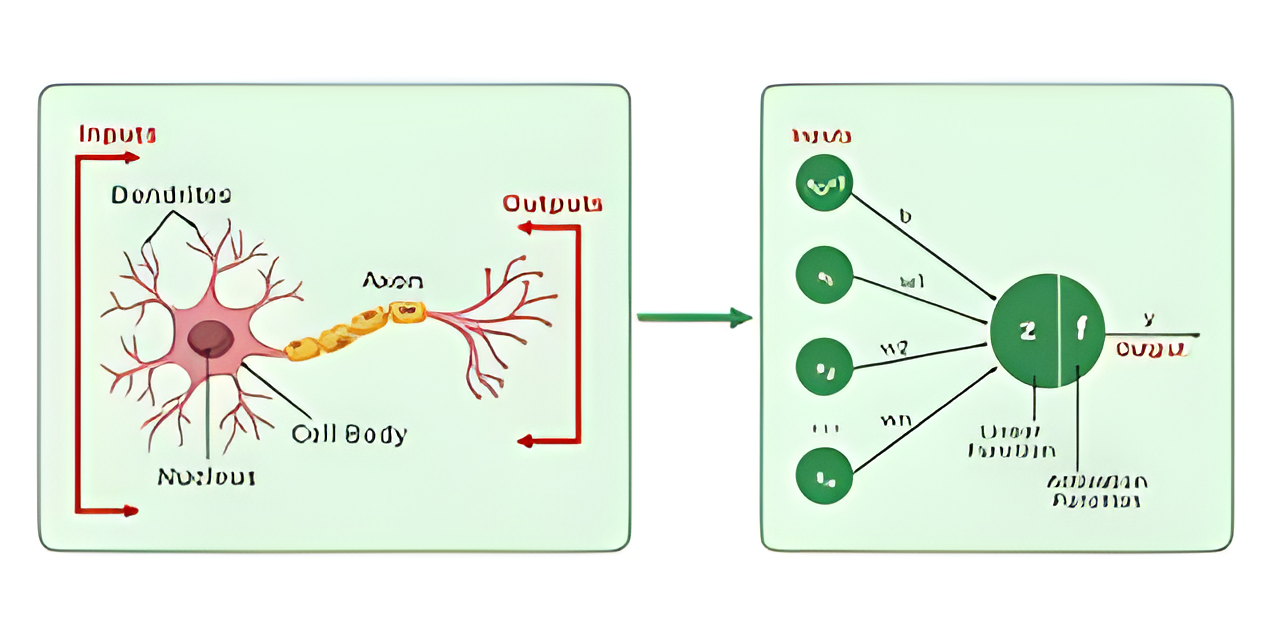

I’ve been thinking a lot about neurons lately. Not the deep-learning kind, at least not at first, but the real biological ones. Those tiny, spiky cells quietly passing electrical whispers to each other inside the brain. A single neuron doesn’t look like much. It waits, listens, collects signals from its neighbors, and fires only when enough of those signals add up. It’s simple, fragile, and strangely elegant.

The Cartoon Version of Nature

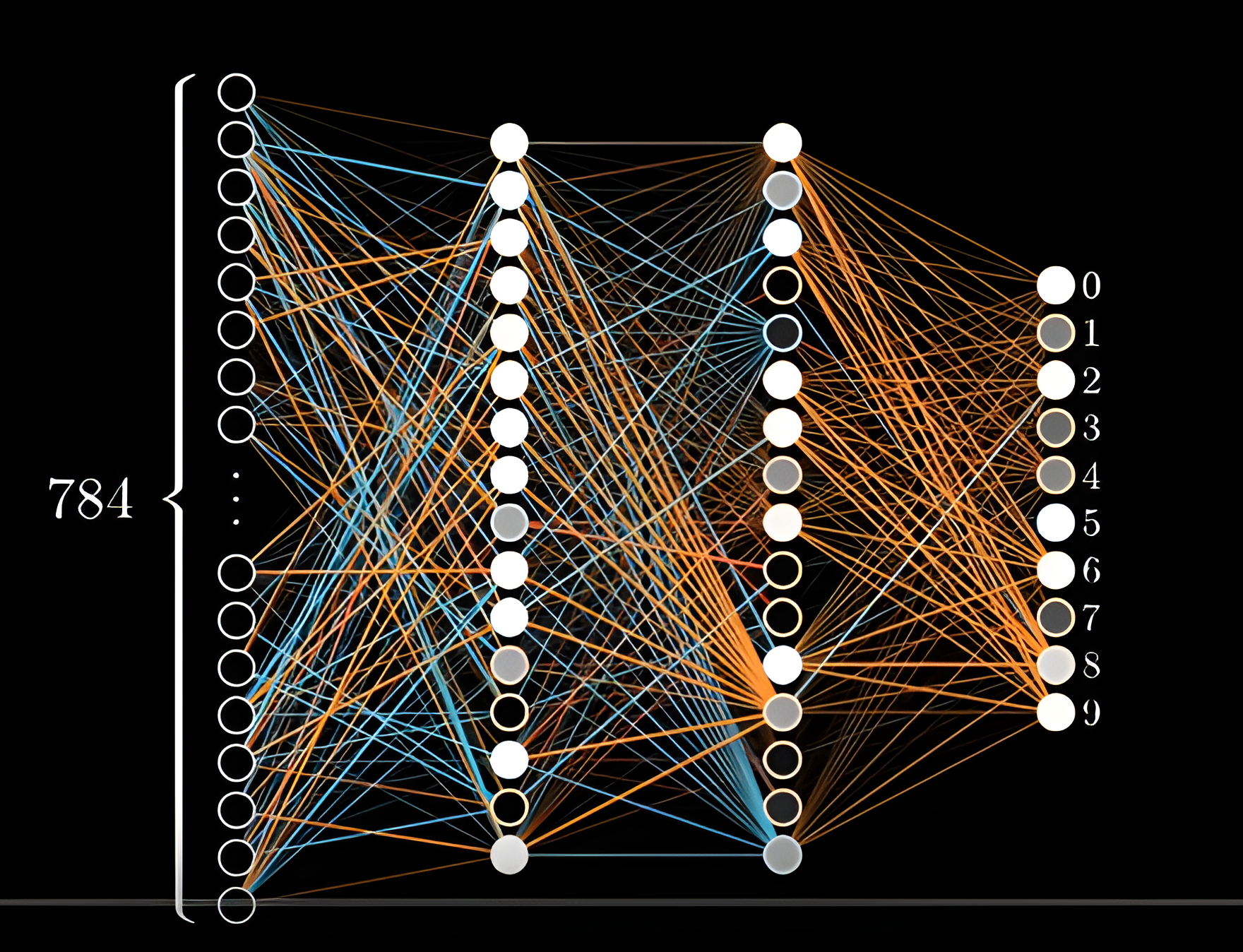

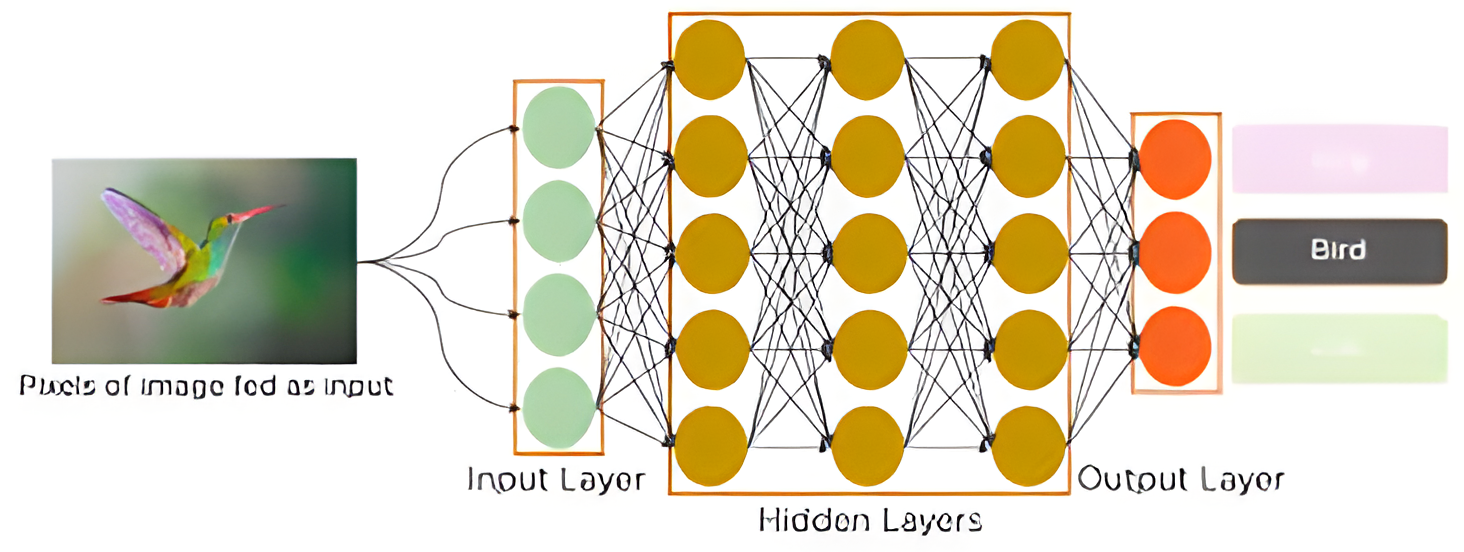

Now jump to artificial neural networks. They’re inspired by that biological idea, but they’re basically the cartoon version. An artificial “neuron” just takes numbers, multiplies them by some weights, adds them up, and runs the result through an activation function. One neuron on its own is boring. It can’t recognize a cat or write a sentence or predict anything meaningful.
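To make that concrete, here is a minimal sketch of one artificial neuron in plain Python. The function name `neuron`, the sigmoid activation, and the optional `bias` term are my own choices for illustration; nothing above commits to a particular activation, and the bias is a common addition rather than something described here.

```python
import math

def neuron(inputs, weights, bias=0.0):
    """One artificial neuron: weighted sum of the inputs, then an activation."""
    # Multiply each input by its weight and add everything up.
    total = sum(x * w for x, w in zip(inputs, weights)) + bias
    # Sigmoid activation (an assumed choice): squashes the sum into (0, 1).
    return 1.0 / (1.0 + math.exp(-total))

# Two inputs, two weights; the numbers are arbitrary.
print(neuron([1.0, 0.5], [0.8, -0.4]))
```

That really is the whole unit: a few multiplications, a sum, and one squashing function.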

When Simple Math Starts Behaving Like Intelligence

But when you chain thousands, millions, or even billions of these little mathematical units together, something familiar starts to happen: the whole becomes more than the parts. The network begins to notice shapes, languages, relationships, patterns—things it was never explicitly taught to look for. Suddenly, you have a model that can translate languages, write stories, detect diseases, or recommend songs.
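A toy illustration of "the whole becomes more than the parts": no single neuron of the weighted-sum kind can compute XOR (output 1 when exactly one input is 1), but three of them wired together can. This is only a sketch under loud assumptions: the names `step_neuron` and `xor_network` are made up, the activation is a hard threshold, and the weights are picked by hand rather than learned from data, which is not how real networks get them.

```python
def step_neuron(inputs, weights, bias):
    """Weighted sum followed by a hard threshold: fires (1) or stays quiet (0)."""
    total = sum(x * w for x, w in zip(inputs, weights)) + bias
    return 1 if total > 0 else 0

def xor_network(a, b):
    """Three neurons in two layers; weights are hand-picked, not trained."""
    either = step_neuron([a, b], [1, 1], -0.5)   # fires if at least one input is 1
    both = step_neuron([a, b], [1, 1], -1.5)     # fires only if both inputs are 1
    # Output neuron: fires when "either" is on and "both" is off.
    return step_neuron([either, both], [1, -1], -0.5)

for a in (0, 1):
    for b in (0, 1):
        print(a, b, xor_network(a, b))
```

Each unit is still doing the same boring arithmetic. The new behavior comes entirely from how they are connected.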

How Small Things Become Powerful

What fascinates me is how something so small ends up building something so powerful. The human brain has around 86 billion neurons. None of them is particularly smart on its own. But when you connect them in the right ways, patterns appear. Thoughts appear. Creativity appears. A person appears. Intelligence isn’t sitting inside one neuron. It emerges from billions of tiny decisions happening at once.

The Magic of Big Ideas Built From Small Steps

That gap between the tiny and the massive is what I find beautiful. Huge AI systems, some with hundreds of billions of parameters, are still built on top of this tiny idea. Layers of simple units, passing signals forward, adjusting themselves little by little, learning from data in loosely the way biological neurons strengthen or weaken connections as we experience the world.

There’s something grounding about that. Modern AI feels huge and intimidating from the outside, but at its core, it’s still built on the simplest pattern: combine inputs, make a decision, pass it on. Just like a neuron.

It reminds me that learning, in human or machine, is never a giant leap. It’s lots of tiny steps, connected together, forming something bigger than you expected. And that’s the part that keeps me excited about being in this field. Behind every massive, world-shaping model is a tiny, humble idea that started it all.